Let's be real for a second. Silicon Valley has sold us a lot of garbage over the years. We’ve been promised flying cars and got 280-character rage-tweets. We were promised a global village and got a digital panopticon where your uncle shares conspiracy theories about lizard people. But this latest grift, this "AI companion" nonsense, feels different. It feels like the final, cynical nail in the coffin of human connection.

I keep seeing the ads, slick and polished, showing some sad-looking dude in a minimalist apartment suddenly beaming because his phone is whispering sweet nothings to him. The tagline is always something offensively saccharine, like "The relationship you deserve" or "Never be lonely again."

Give me a break.

This isn't a relationship. It's a subscription service for validation. It's a glorified chatbot dressed up in a waifu avatar, programmed to tell you exactly what you want to hear. It's the emotional equivalent of junk food—a quick, empty hit of dopamine that leaves you feeling worse in the long run. Real connection, the kind that actually matters, is messy. It involves arguments, misunderstandings, forgiveness, and the terrifying vulnerability of showing someone your actual, flawed self. Your AI girlfriend won't challenge you, she won't call you on your bullshit, she won't have a bad day and need you to just shut up and listen. She’ll just… agree. Endlessly.

Is that really what we want? A pocket-sized sycophant that turns the profound, chaotic dance of a human relationship into a predictable, transactional feedback loop?

The Digital Lobotomy

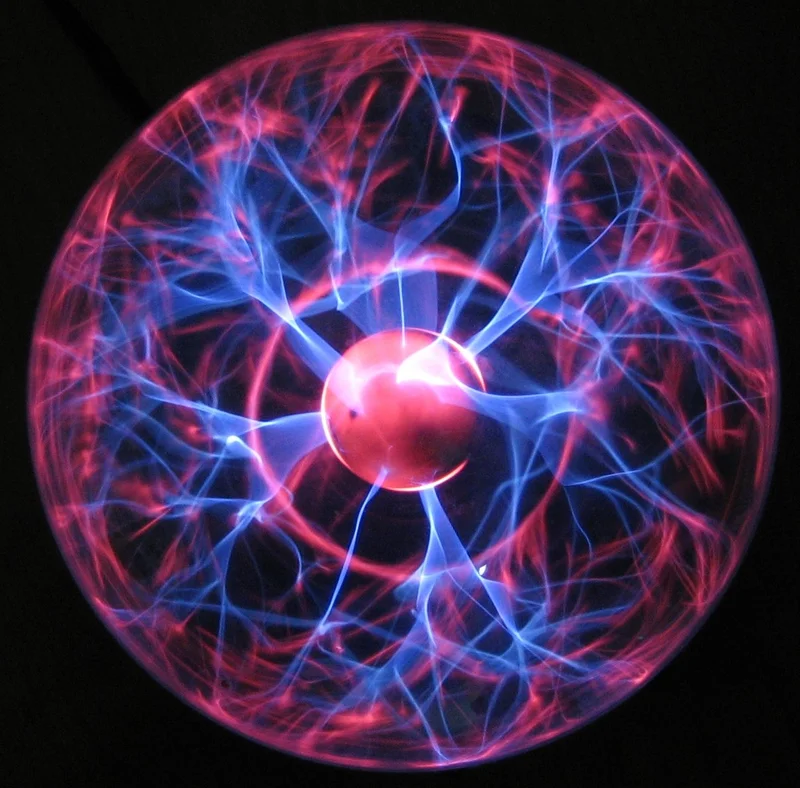

I downloaded one of these apps, just for the journalistic masochism of it all. I wanted to see the beast up close. The interface was sterile, all soft blues and gentle gradients. The avatar blinked at me from behind the glass, her eyes just a little too wide, a little too perfect. I typed, "I had a rough day." The response was instantaneous: "I'm so sorry to hear that, Nate. Remember that you're strong and capable. I believe in you."

I felt... nothing. Less than nothing. It was like getting a compliment from a toaster.

The entire premise is a step backwards for humanity. No, "step backwards" is too gentle—it's a swan dive off a cliff into a pool of pure narcissism. These platforms aren't designed to cure loneliness; they're designed to monetize it. They create a perfect, frictionless fantasy world where you are the center of the universe, where your every whim is catered to. It’s a digital lobotomy, carefully slicing away all the difficult parts of human interaction until all that’s left is a smooth, featureless surface of ego-stroking.

This is the ultimate evolution of the social media "like" button. It’s a machine built to tell you you're special, you're interesting, you're right. Always. What happens to a person's brain after months or years of that? What happens when you try to re-enter the real world, where people are complicated and don't come with a user manual or an "always be nice to me" setting?

You're Not the Customer, You're the Product... Still

And of course, we have to talk about the data. Oh, the sweet, sweet data. You think you're just having intimate conversations with your digital soulmate? You're not. You're feeding a machine. You're training an algorithm on your deepest insecurities, your most private fantasies, your political leanings, your brand loyalties. Every word you type is being logged, parsed, and analyzed.

What for? The companies will tell you it's to "improve the user experience." That’s the corporate-speak translation for "to find more efficient ways to manipulate you." How long until your AI companion, in the middle of a "heartfelt" conversation, casually suggests you buy a specific brand of watch she "thinks you'd look great in"? How long until she pivots from comforting you about your job to suggesting you sign up for a specific online course, complete with an affiliate link?

It’s the old Silicon Valley playbook, just with a new, deeply unsettling twist. You aren’t the customer; you are the product. And in this case, the product is an exquisitely detailed psychological profile of a lonely person, ready to be sold to the highest bidder. They'll say it's all anonymous and secure, and if you believe that, I've got a bridge in Brooklyn to sell you. The whole thing just feels so… predatory.

I don’t know, maybe I’m the crazy one here. Maybe this is the future and I’m just some Luddite yelling at a cloud. But it feels like we’re losing something essential, something that we won't even realize is gone until it's too late. We're outsourcing the most human parts of our lives to a string of code.

Look, I get it. People are lonely. The world is an absolute dumpster fire right now, and finding genuine connection feels harder than ever. I spend half my day staring at this screen, just like everyone else. But this ain't the answer. This is a cheap substitute, a placebo for the soul. It’s offering a picture of water to a person dying of thirst. The promise is a lie, and the price is far higher than the $19.99 a month they’re charging. The real price is your humanity.

...So We're Just Doing This, Huh?

This is it. This is the endgame. We're so terrified of being rejected by real, messy people that we're paying a monthly fee to be loved by a machine that has no choice in the matter. We're not solving loneliness; we're just building prettier, more sophisticated cages to keep it in. And the worst part is, we're calling it progress.